NVIDIA AI releases Orchestrator-8B: a controller trained with reinforcement learning for efficient tool and model selection

How can an AI system learn to choose the right model or tool for each step of a task, rather than always relying on one large model to do everything? NVIDIA researchers have released ToolOrchestra, a new method for training small language models to act as orchestrators, the “brains” of agents that use heterogeneous tools.

From a single-model agent to an orchestration policy

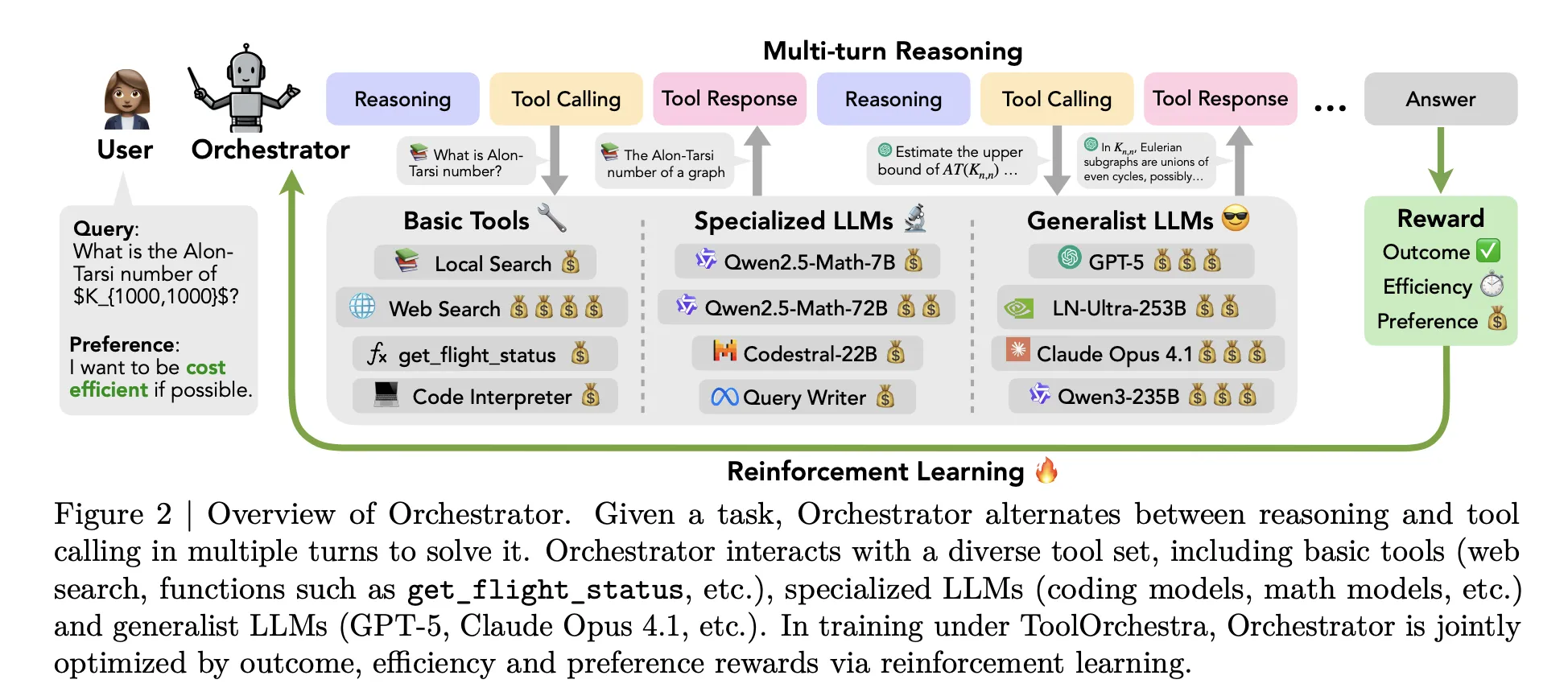

Most AI agents today follow a simple pattern. A single large model (such as GPT-5) receives a prompt describing the available tools and then decides when to invoke a web search or a code interpreter. All high-level reasoning still stays within that same model. ToolOrchestra changes this setup. It trains a dedicated controller model called Orchestrator-8B, which treats both classic tools and other LLMs as callable components.

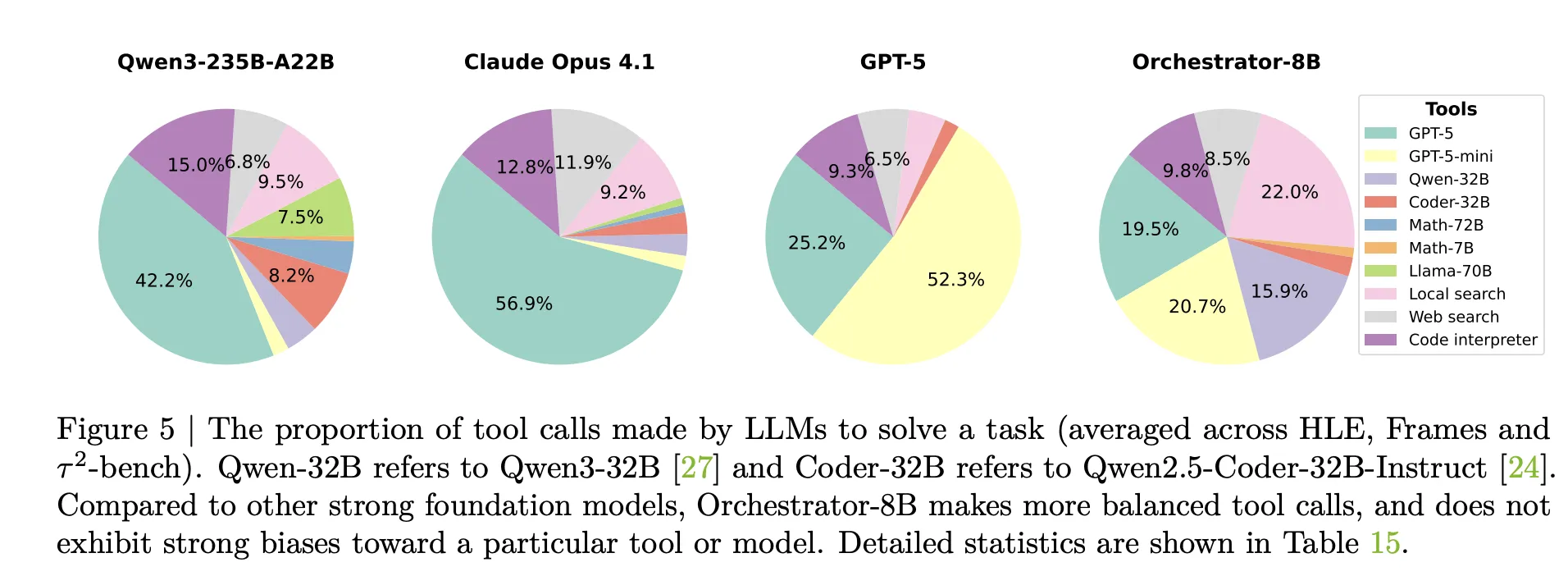

A pilot study in the same paper shows why naive prompting is not enough. When Qwen3-8B is prompted to route between GPT-5, GPT-5 mini, Qwen3-32B, and Qwen2.5-Coder-32B, it delegates to GPT-5 73% of the time. When GPT-5 acts as its own orchestrator, it calls GPT-5 or GPT-5 mini 98% of the time. The research team calls these self-enhancement and other-enhancement biases. In both cases the routing policy overuses strong models and ignores cost instructions.

In contrast, ToolOrchestra explicitly trains a small orchestrator for this routing problem by using reinforcement learning on complete multi-turn trajectories.

What is Orchestrator-8B?

Orchestrator-8B is a decoder-only Transformer with 8B parameters. It was built by fine-tuning Qwen3-8B as an orchestration model and is published on Hugging Face.

During inference, the system runs a multi-turn loop that alternates between reasoning and tool invocation. Each rollout has three main steps. First, Orchestrator-8B reads the user instruction and an optional natural language preference description, such as a request to prioritize low latency or to avoid web search. Second, it generates internal chain-of-thought style reasoning and plans an action. Third, it selects a tool from the available set and issues a structured tool call in a unified JSON format. The environment executes the call, appends the result as an observation, and feeds it back into the next step. The process stops when a termination signal is generated or when the maximum of 50 turns is reached.
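
The loop described above can be sketched roughly as follows. This is a minimal illustration, not the released implementation: the JSON field names (`reasoning`, `tool_call`, `final_answer`), the `run_episode` function, and the toy policy and calculator tool are all assumptions made for the example. Only the 50-turn cap and the reason/call/observe cycle come from the article.

```python
import json
from typing import Callable

MAX_TURNS = 50  # turn cap stated in the article


def run_episode(policy: Callable[[list], str],
                tools: dict,
                instruction: str,
                preference: str = "") -> list:
    """Alternate reasoning and tool calls until the policy stops or the cap is hit."""
    history = [{"role": "user", "content": instruction, "preference": preference}]
    for _ in range(MAX_TURNS):
        # Assumed contract: the policy returns a JSON string holding "reasoning"
        # plus either a "tool_call" or a "final_answer".
        step = json.loads(policy(history))
        history.append({"role": "assistant", **step})
        if "final_answer" in step:  # termination signal
            break
        call = step["tool_call"]
        observation = tools[call["name"]](**call["arguments"])
        # The observation is appended so the next turn can condition on it.
        history.append({"role": "tool", "name": call["name"], "content": observation})
    return history


def toy_policy(history: list) -> str:
    """Stand-in for the orchestrator model: one tool call, then an answer."""
    if history[-1]["role"] == "tool":
        return json.dumps({"reasoning": "The tool returned the sum.",
                           "final_answer": history[-1]["content"]})
    return json.dumps({"reasoning": "Delegate the arithmetic to the calculator.",
                       "tool_call": {"name": "add", "arguments": {"a": 2, "b": 3}}})


toy_tools = {"add": lambda a, b: str(a + b)}
trace = run_episode(toy_policy, toy_tools, "What is 2 + 3?", "prefer low latency")
```

In a real deployment the policy would be a call to the orchestrator model and the tools would wrap search, code execution, or other LLM endpoints; the control flow is the part the sketch is meant to show.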

The tools cover three main groups. Basic tools include Tavily web search, a Python sandbox code interpreter, and a local Faiss index built with Qwen3-Embedding-8B. Specialized LLMs include Qwen2.5-Math-72B, Qwen2.5-Math-7B, and Qwen2.5-Coder-32B. Generalist LLM tools include GPT-5, GPT-5 mini, Llama 3.3-70B-Instruct, and Qwen3-32B. All tools share the same schema, consisting of a name, a natural language description, and a typed parameter specification.
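
To make the shared schema concrete, here is one way a name, description, and typed parameter specification could be represented and checked. The `ToolSpec` class, its field layout, the tool identifiers, and the `validate` helper are hypothetical; the article only states which three pieces of information every tool exposes.

```python
from dataclasses import dataclass, field


@dataclass
class ToolSpec:
    """Hypothetical container for the three schema fields named in the article."""
    name: str
    description: str
    parameters: dict = field(default_factory=dict)  # parameter name -> Python type

    def validate(self, arguments: dict) -> bool:
        """Accept a call only if the argument names and types match the spec."""
        return (set(arguments) == set(self.parameters)
                and all(isinstance(arguments[key], expected)
                        for key, expected in self.parameters.items()))


# Illustrative entries only; the names and parameters are assumptions.
REGISTRY = {spec.name: spec for spec in [
    ToolSpec("web_search", "Search the web and return snippets.", {"query": str}),
    ToolSpec("python", "Run code in a sandbox and return stdout.", {"code": str}),
    ToolSpec("math_llm", "Ask a math-specialized LLM.", {"prompt": str, "max_tokens": int}),
]}

ok = REGISTRY["web_search"].validate({"query": "FRAMES benchmark"})
missing = REGISTRY["math_llm"].validate({"prompt": "Integrate x^2"})
wrong_type = REGISTRY["math_llm"].validate({"prompt": "Integrate x^2", "max_tokens": "100"})
```

Because search, code execution, and LLM calls all look the same to the orchestrator under a scheme like this, swapping one model for another only changes a registry entry, not the calling logic.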

End-to-end reinforcement learning with multi-objective rewards

ToolOrchestra formulates the entire workflow as a Markov decision process. The state contains the conversation history, past tool calls and observations, and the user preferences. The action is the next text step, which includes both reasoning tokens and a tool call. After up to 50 steps, the environment computes a scalar reward for the complete trajectory.

The reward has three components. The outcome reward is binary and depends on whether the trajectory solves the task. For open-ended answers, GPT-5 is used as a judge to compare the model output with the reference. The efficiency reward penalizes both monetary cost and wall-clock latency. Token usage of proprietary and open source tools is mapped to monetary cost using public API and Together AI pricing. The preference reward measures how well tool usage matches the user’s preference vector, which can increase or decrease the weight placed on cost, latency, or specific tools. These components are combined into a single scalar using the preference vector.
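
The article does not give the exact formula, so the following is only a sketch of how such a combination might look. It assumes a plain weighted sum, a preference dictionary with `outcome`, `cost`, `latency`, and per-tool weights, and cost and latency measured in dollars and minutes. All of these names, units, and the functional form are illustrative assumptions.

```python
def trajectory_reward(solved: bool,
                      cost_usd: float,
                      latency_min: float,
                      tool_counts: dict,
                      pref: dict) -> float:
    """Combine outcome, efficiency, and tool-preference terms into one scalar.

    Assumed form: a weighted sum whose weights come from the preference vector.
    """
    total_calls = sum(tool_counts.values()) or 1  # avoid division by zero
    # Preference term: reward (or penalize) the share of calls sent to each tool.
    tool_term = sum(pref.get("tools", {}).get(name, 0.0) * count / total_calls
                    for name, count in tool_counts.items())
    return (pref["outcome"] * float(solved)   # binary outcome reward
            - pref["cost"] * cost_usd         # monetary cost penalty
            - pref["latency"] * latency_min   # wall-clock latency penalty
            + tool_term)


pref = {"outcome": 1.0, "cost": 1.0, "latency": 0.01, "tools": {"web_search": 0.1}}
cheap = trajectory_reward(True, 0.092, 8.2, {"web_search": 3, "gpt-5": 1}, pref)
pricey = trajectory_reward(True, 0.302, 19.8, {"web_search": 3, "gpt-5": 1}, pref)
```

With these illustrative weights, two trajectories that both solve the task are ranked by cost and latency, which is the behavior the efficiency term is meant to encourage.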

The policy is optimized with Group Relative Policy Optimization (GRPO), a variant of policy gradient reinforcement learning that normalizes rewards within groups of trajectories for the same task. The training process includes filters that discard trajectories with invalid tool call formats or weak reward variance to stabilize optimization.
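
The group normalization at the heart of GRPO can be illustrated in a few lines. This sketch assumes the common formulation, where each trajectory's advantage is its reward minus the group mean, divided by the group standard deviation. The `min_std` threshold and the use of the population standard deviation are assumptions, and the format filter mentioned above is not shown.

```python
from statistics import mean, pstdev


def group_advantages(rewards: list, min_std: float = 1e-6):
    """Normalize rewards within one group of trajectories for the same task.

    Returns None when the reward spread is too small to carry a learning
    signal, mirroring the weak-variance filter described in the article.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population standard deviation (an assumption)
    if sigma < min_std:
        return None  # group is dropped from the batch
    return [(r - mu) / sigma for r in rewards]


mixed = group_advantages([1.0, 0.0, 1.0, 0.0])   # half the rollouts solved the task
uniform = group_advantages([1.0, 1.0, 1.0])      # every rollout got the same reward
```

A group in which every rollout receives the same reward yields zero advantage everywhere, so dropping it removes a batch element that would contribute no gradient.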

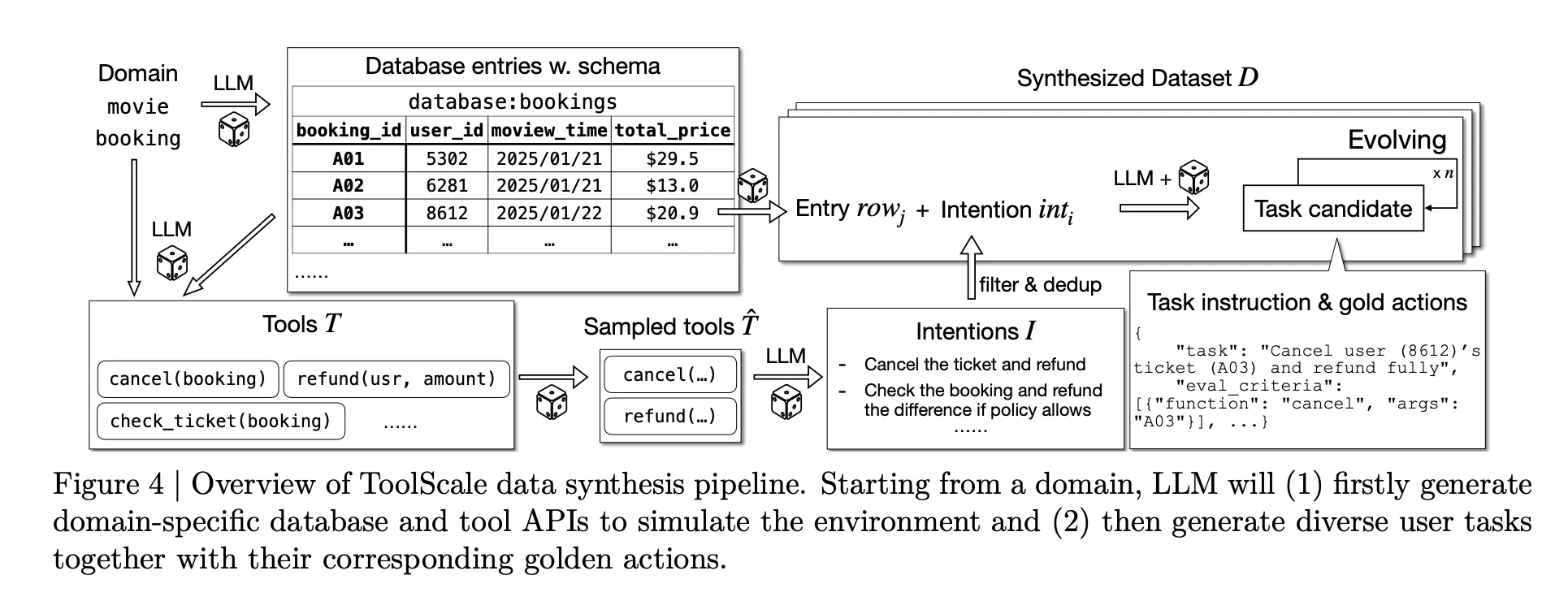

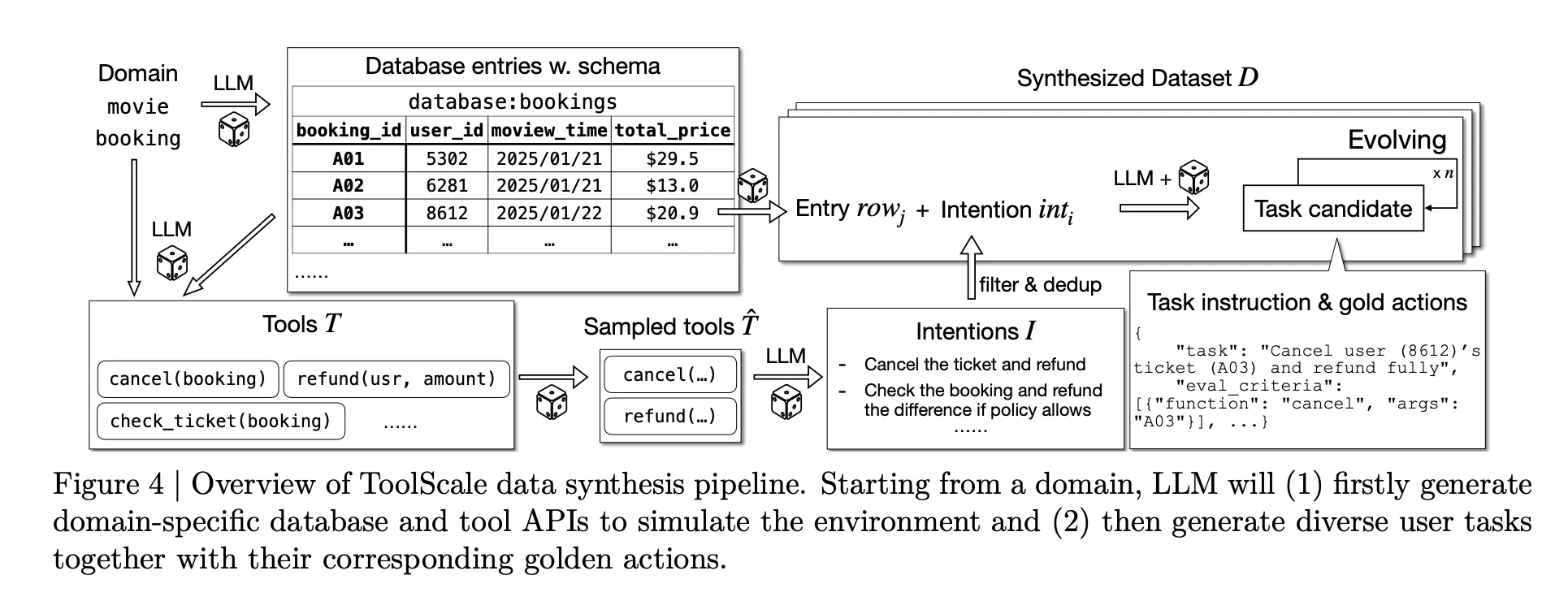

To make this training possible, the research team introduces ToolScale, a synthetic dataset of multi-step tool-calling tasks. For each domain, an LLM generates a database schema, database entries, domain-specific APIs, and then a variety of user tasks with realistic sequences of function calls and the required intermediate information.

Benchmark results and cost overview

The NVIDIA research team evaluates Orchestrator-8B on three challenging benchmarks: Humanity’s Last Exam, FRAMES, and τ² Bench. These benchmarks target long-horizon reasoning, factuality under retrieval, and function calling in a dual-control environment.

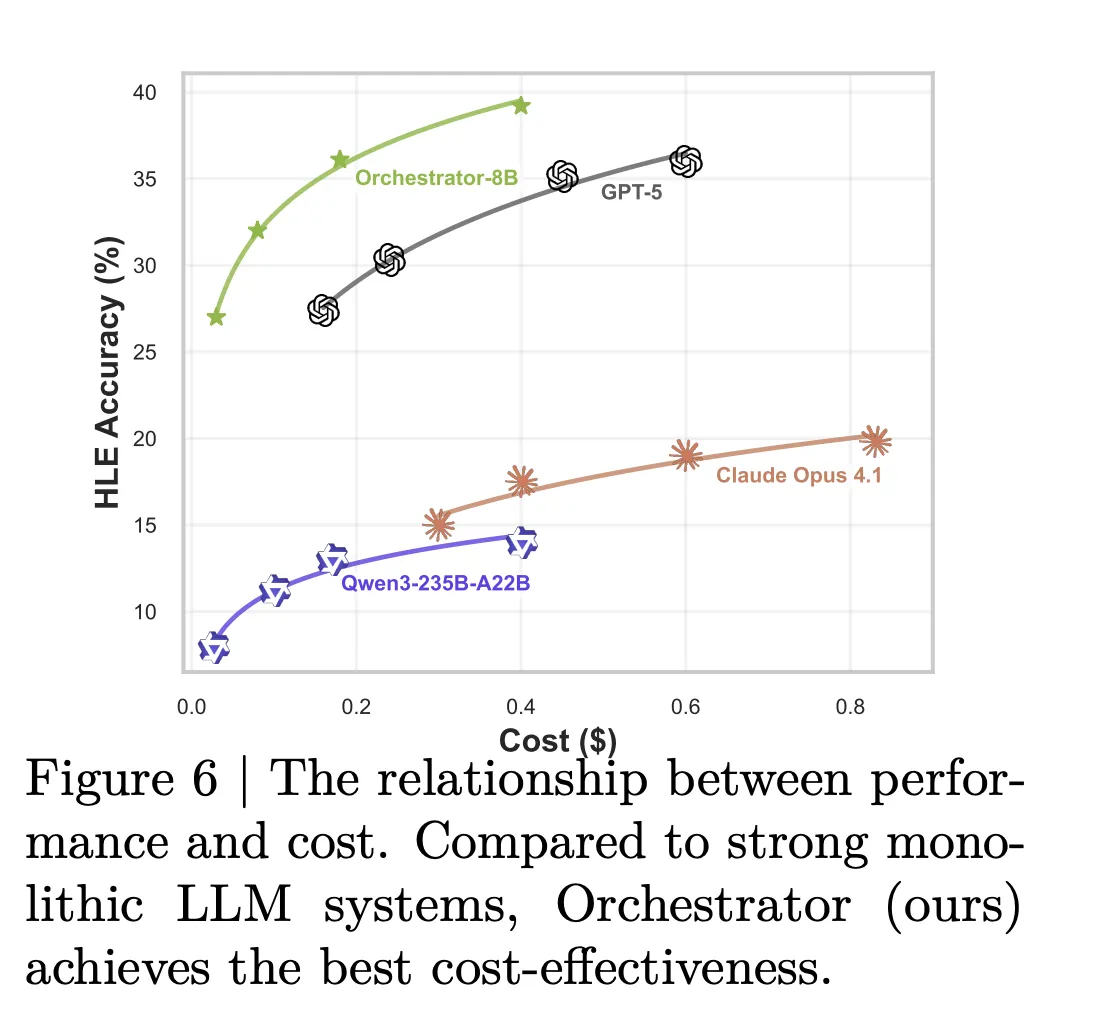

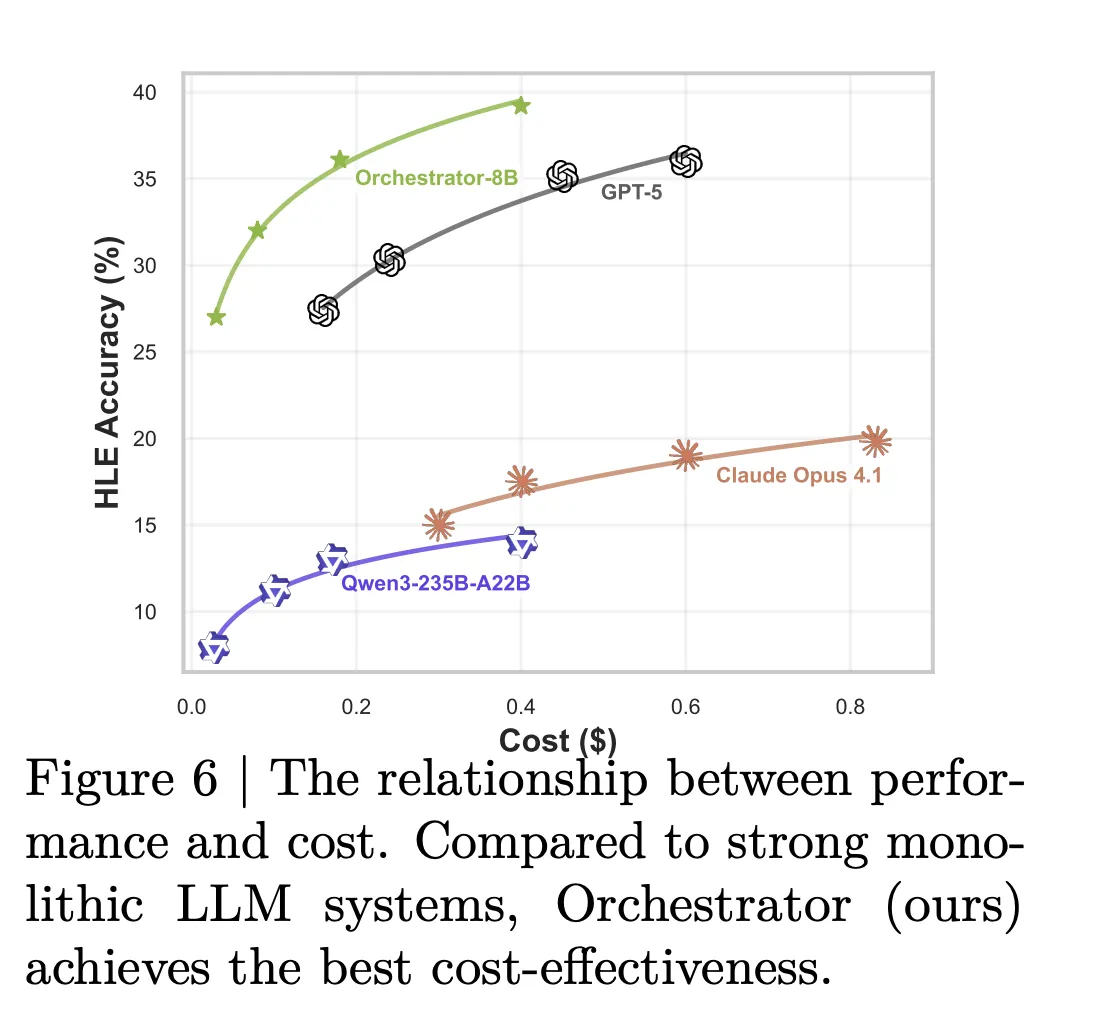

On the text-only questions of Humanity’s Last Exam, Orchestrator-8B achieved an accuracy of 37.1%. GPT-5 with basic tools achieved 35.1% in the same setting. On FRAMES, Orchestrator-8B achieved 76.3%, while GPT-5 with tools achieved 74.0%. On τ² Bench, Orchestrator-8B scored 80.2%, while GPT-5 with basic tools scored 77.7%.

The efficiency gap is larger. In a configuration using the basic tools plus specialized and generalist LLM tools, Orchestrator-8B had an average cost of 9.2 cents and a latency of 8.2 minutes per query (averaged across Humanity’s Last Exam and FRAMES). In the same configuration, GPT-5 costs 30.2 cents and takes 19.8 minutes on average. The model card summarizes this as Orchestrator-8B running at about 30% of GPT-5’s monetary cost while being about 2.5 times faster.
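
The headline ratios follow directly from the per-query averages quoted above. The short check below uses only those four numbers; the variable names are illustrative.

```python
# Per-query averages quoted in the article (cents, minutes).
orchestrator_cost, orchestrator_latency = 9.2, 8.2
gpt5_cost, gpt5_latency = 30.2, 19.8

cost_ratio = orchestrator_cost / gpt5_cost      # fraction of GPT-5's cost
speedup = gpt5_latency / orchestrator_latency   # how many times faster

print(f"cost ratio: {cost_ratio:.1%}, speedup: {speedup:.2f}x")
```

The cost ratio comes out at roughly 30%, and the latency ratio at a little over 2.4, which the model card rounds to about 2.5 times.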

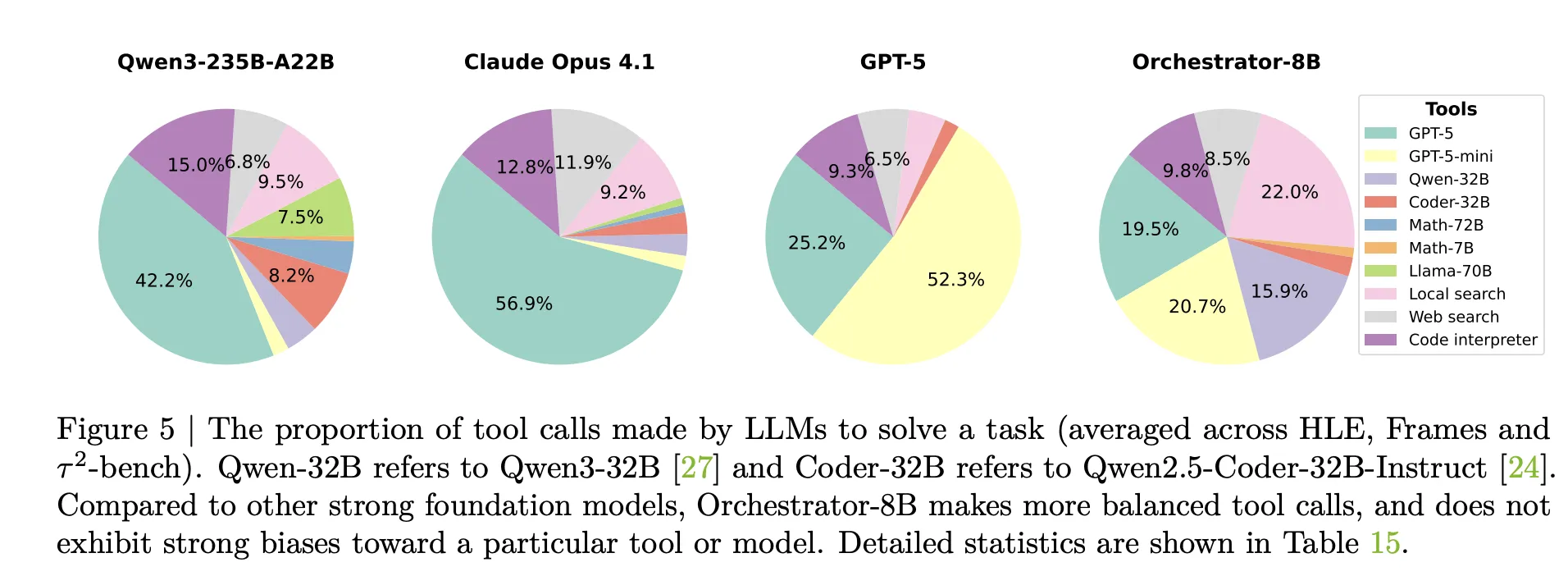

An analysis of tool usage supports this picture. Claude Opus 4.1 used as the orchestrator calls GPT-5 most of the time. GPT-5 used as the orchestrator prefers GPT-5 mini. Orchestrator-8B distributes calls more evenly across strong models, cheaper models, search, local retrieval, and the code interpreter, and achieves higher accuracy at lower cost for the same turn budget.

Generalization experiments replace training-time tools with unseen models, such as OpenMath Llama-2-70B, DeepSeek-Math-7B-Instruct, Codestral-22B-v0.1, Claude Sonnet-4.1, and Gemma-3-27B. Orchestrator-8B still achieves the best trade-off between accuracy, cost, and latency among all benchmarks in this setting. A separate preference-aware test set shows that Orchestrator-8B also tracks user tool usage preferences more closely than GPT-5, Claude Opus-4.1, and Qwen3-235B-A22B under the same reward metric.

Main points

- ToolOrchestra trains the 8B parameter orchestration model Orchestrator-8B, which selects and orders tools and LLMs to solve multi-step agent tasks using reinforcement learning with outcome, efficiency, and preference-aware rewards.

- Orchestrator-8B is released as an open-weight model on Hugging Face. It is designed to coordinate diverse tools such as web search, code execution, retrieval, and specialized LLMs through a unified schema.

- On Humanity’s Last Exam, Orchestrator-8B reaches 37.1% accuracy, exceeding GPT-5’s 35.1%, while being about 2.5 times more efficient; on τ² Bench and FRAMES it also outperforms GPT-5 at only about 30% of the cost.

- The framework shows that naively prompting a frontier LLM to act as its own router leads to self-enhancement bias, where it overuses itself or a small set of strong models, while a trained orchestrator learns a more balanced, cost-aware routing policy across multiple tools.

Editor’s Note

NVIDIA’s ToolOrchestra is a practical step toward compound AI systems, in which the 8B orchestration model Orchestrator-8B learns an explicit routing policy over tools and LLMs rather than relying on a single frontier model. It shows clear gains on Humanity’s Last Exam, FRAMES, and τ² Bench at about 30% of the cost and roughly 2.5x better efficiency than GPT-5-based baselines, making it directly relevant to teams concerned with accuracy, latency, and budget. This release makes the orchestration policy a first-class optimization target in AI systems.


Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of artificial intelligence for the benefit of society. His most recent endeavor is the launch of Marktechpost, an artificial intelligence media platform that stands out for its in-depth coverage of machine learning and deep learning news that is technically sound and easy to understand for a broad audience. The platform has more than 2 million monthly views, which shows that it is very popular among viewers.
