Microsoft AI Lab unveils MAI-Voice-1 and MAI-1-preview: new in-house models for voice AI

Microsoft AI Lab has officially launched MAI-Voice-1 and MAI-1-preview, marking a new stage in the company’s artificial intelligence research and development. The announcement shows Microsoft AI building models in-house, without third-party involvement. MAI-Voice-1 and MAI-1-preview play distinct but complementary roles: speech generation and general-purpose language understanding.

MAI-Voice-1: Technical details and features

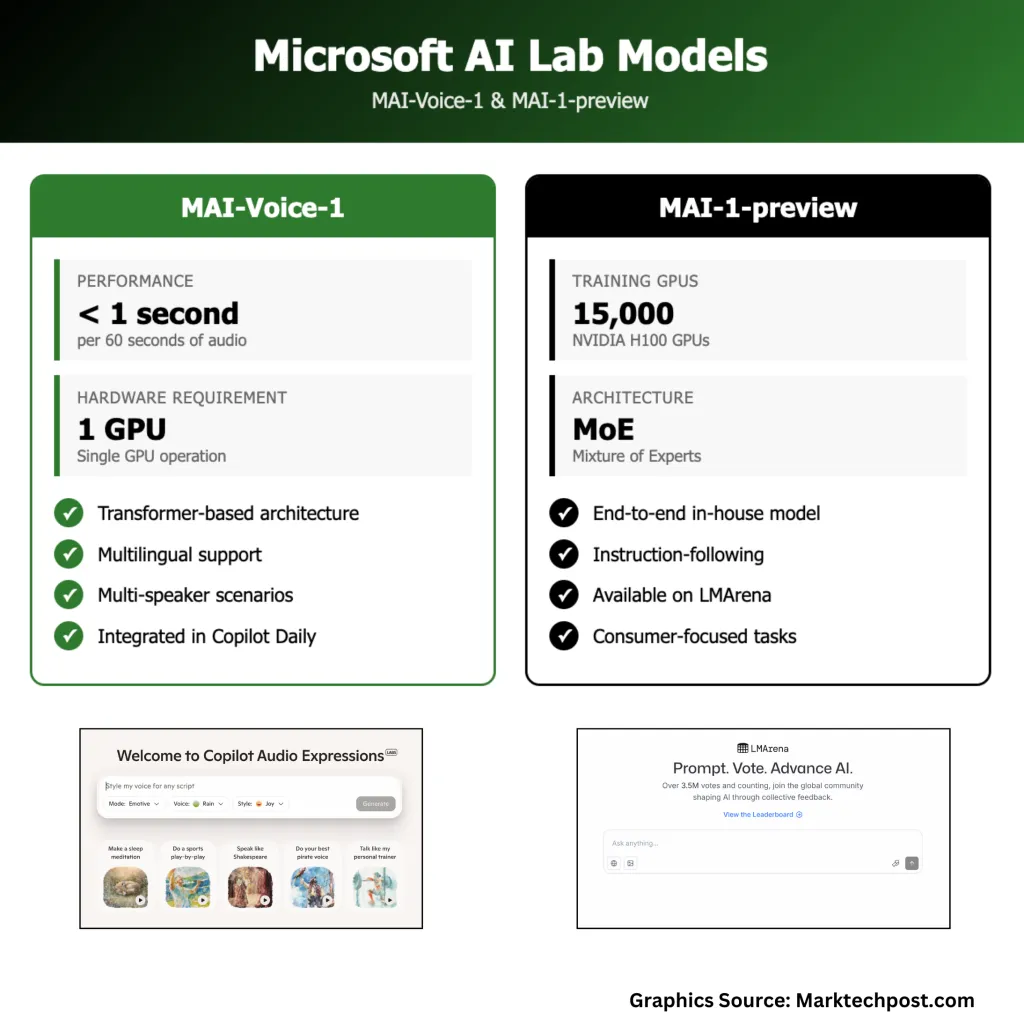

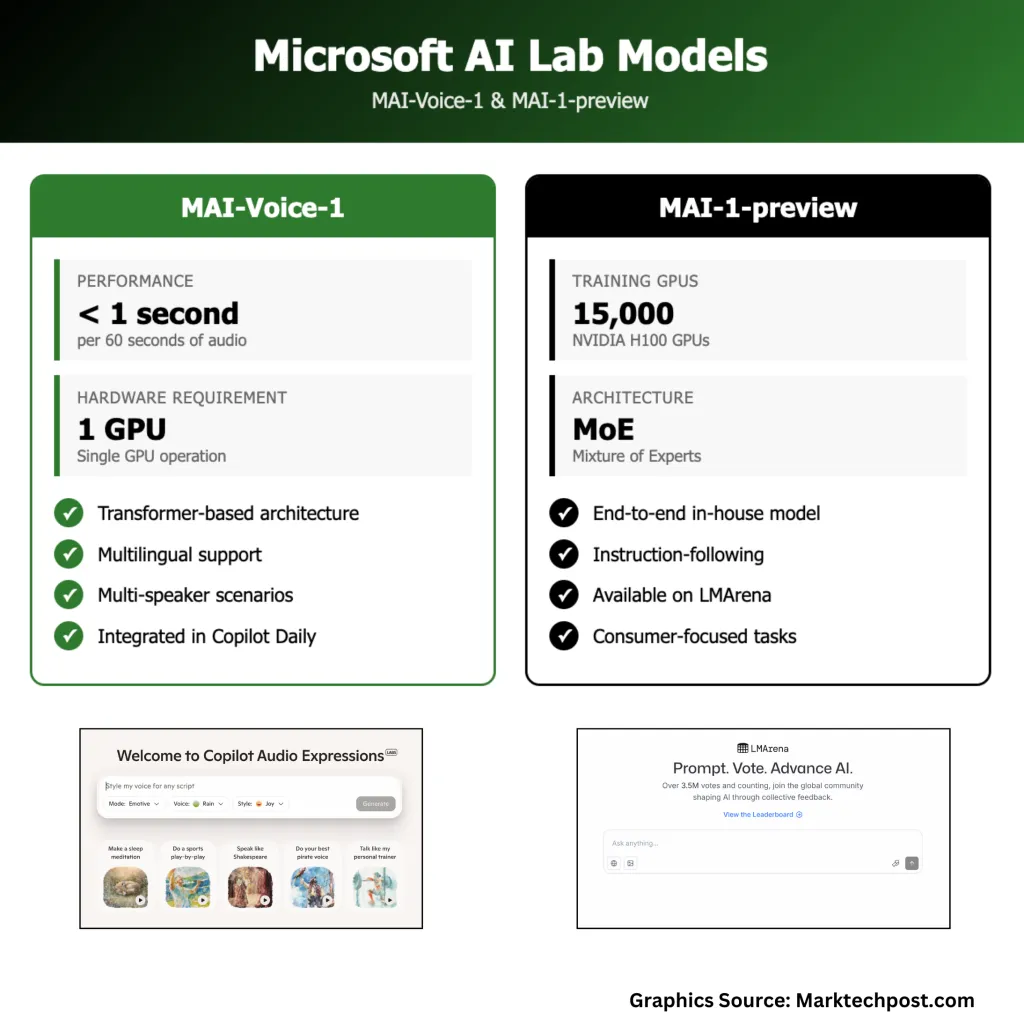

MAI-Voice-1 is a speech generation model that produces high-fidelity audio. It can generate a minute of natural-sounding audio in about one second on a single GPU, which suits applications such as interactive assistants and podcasts that need low latency and modest hardware requirements.
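To put the speed claim in perspective, speech synthesis speed is often expressed as a real-time factor (RTF): wall-clock generation time divided by the duration of audio produced, so lower is faster. The sketch below simply plugs in the article’s figures (60 seconds of audio in about 1 second on one GPU); the function name is a hypothetical helper and the numbers are illustrative arithmetic, not a benchmark of MAI-Voice-1.

```python
def real_time_factor(generation_seconds: float, audio_seconds: float) -> float:
    """Return generation time divided by audio duration (lower is faster)."""
    if audio_seconds <= 0:
        raise ValueError("audio_seconds must be positive")
    return generation_seconds / audio_seconds


# Figures taken from the article: ~60 s of audio in ~1 s on a single GPU.
rtf = real_time_factor(generation_seconds=1.0, audio_seconds=60.0)
speedup = 1.0 / rtf  # seconds of audio produced per second of compute

# Hypothetical example: time to synthesize a 10-second assistant reply.
reply_latency = 10.0 * rtf

print(f"RTF = {rtf:.4f}, speedup = {speedup:.0f}x real time")
print(f"A 10 s reply would take about {reply_latency:.2f} s to synthesize")
```

An RTF well below 1 is what makes streaming use cases such as live assistants practical, since audio is produced faster than it is played back.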

The model uses a transformer-based architecture trained on a wide variety of speech datasets. It handles both single-speaker and multi-speaker scenarios, producing expressive, context-appropriate voice output.

MAI-Voice-1 has been integrated into Microsoft products such as Copilot Daily, where it delivers voice updates and news digests. It is also available for testing in Copilot Labs, where users can create audio stories or guided narratives from text prompts.

Technically, the model focuses on quality, versatility, and speed. Because it runs on a single GPU rather than requiring multiple GPUs, it can be integrated into consumer devices and cloud applications beyond research settings.

MAI-1-preview: Foundation model architecture and performance

MAI-1-preview is Microsoft’s first foundation language model developed end to end in-house. Unlike earlier models that Microsoft integrated or licensed from outside partners, MAI-1-preview was trained entirely on Microsoft’s own infrastructure, using a mixture-of-experts architecture and approximately 15,000 NVIDIA H100 GPUs.
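For readers unfamiliar with the term, a mixture-of-experts (MoE) layer uses a learned gate to route each token to a small subset of "expert" sub-networks, so only a fraction of the model’s parameters is active for any given token. Microsoft has not published MAI-1-preview’s routing details; the sketch below is a generic top-k gating layer with random weights, and every name and size in it (`D_MODEL`, `N_EXPERTS`, `TOP_K`, `moe_layer`) is an illustrative assumption rather than the model’s actual configuration.

```python
import numpy as np

rng = np.random.default_rng(0)

# Illustrative sizes only; NOT MAI-1-preview's real configuration.
D_MODEL, D_HIDDEN, N_EXPERTS, TOP_K = 16, 32, 8, 2

# Each expert is a small two-layer feed-forward network (random weights).
experts = [
    (rng.standard_normal((D_MODEL, D_HIDDEN)) * 0.1,
     rng.standard_normal((D_HIDDEN, D_MODEL)) * 0.1)
    for _ in range(N_EXPERTS)
]
# The gate produces one score per expert for every token.
gate_w = rng.standard_normal((D_MODEL, N_EXPERTS)) * 0.1


def softmax(x: np.ndarray) -> np.ndarray:
    """Numerically stable softmax over the last axis."""
    e = np.exp(x - x.max(axis=-1, keepdims=True))
    return e / e.sum(axis=-1, keepdims=True)


def moe_layer(tokens: np.ndarray) -> tuple[np.ndarray, np.ndarray]:
    """Route each token to its TOP_K highest-scoring experts and mix their outputs."""
    logits = tokens @ gate_w                            # (n_tokens, N_EXPERTS)
    top_idx = np.argsort(logits, axis=-1)[:, -TOP_K:]   # indices of the k highest scores
    out = np.zeros_like(tokens)
    for i, token in enumerate(tokens):
        chosen = top_idx[i]
        weights = softmax(logits[i, chosen])            # renormalize over chosen experts only
        for w, e in zip(weights, chosen):
            w_in, w_out = experts[e]
            hidden = np.maximum(token @ w_in, 0.0)      # ReLU
            out[i] += w * (hidden @ w_out)              # weighted sum of expert outputs
    return out, top_idx


tokens = rng.standard_normal((4, D_MODEL))
out, routing = moe_layer(tokens)
print(out.shape)   # (4, 16)
print(routing)     # which 2 of the 8 experts each token was sent to
```

Only `TOP_K` of the `N_EXPERTS` feed-forward blocks run for each token, which is the general reason MoE models can grow their total parameter count without a proportional increase in per-token compute.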

The Microsoft AI team made MAI-1-preview available for public testing on the LMArena platform, where it is compared side by side with several other models. MAI-1-preview is optimized for instruction following and everyday conversational tasks, making it better suited to consumer-facing applications than to enterprise or highly specialized use cases. Microsoft has begun rolling out access for selected text-based use cases within Copilot, expanding gradually to collect feedback and refine the system.

Model development and training infrastructure

Development of MAI-Voice-1 and MAI-1-preview is supported by Microsoft’s next-generation GB200 GPU cluster, custom infrastructure designed specifically for training large generative models. Beyond hardware, Microsoft has invested heavily in talent, building deep expertise in generative AI, speech synthesis, and large-scale systems engineering. The company’s approach to model development balances fundamental research with practical deployment, aiming for systems that are not only theoretically impressive but also reliable and useful in everyday situations.

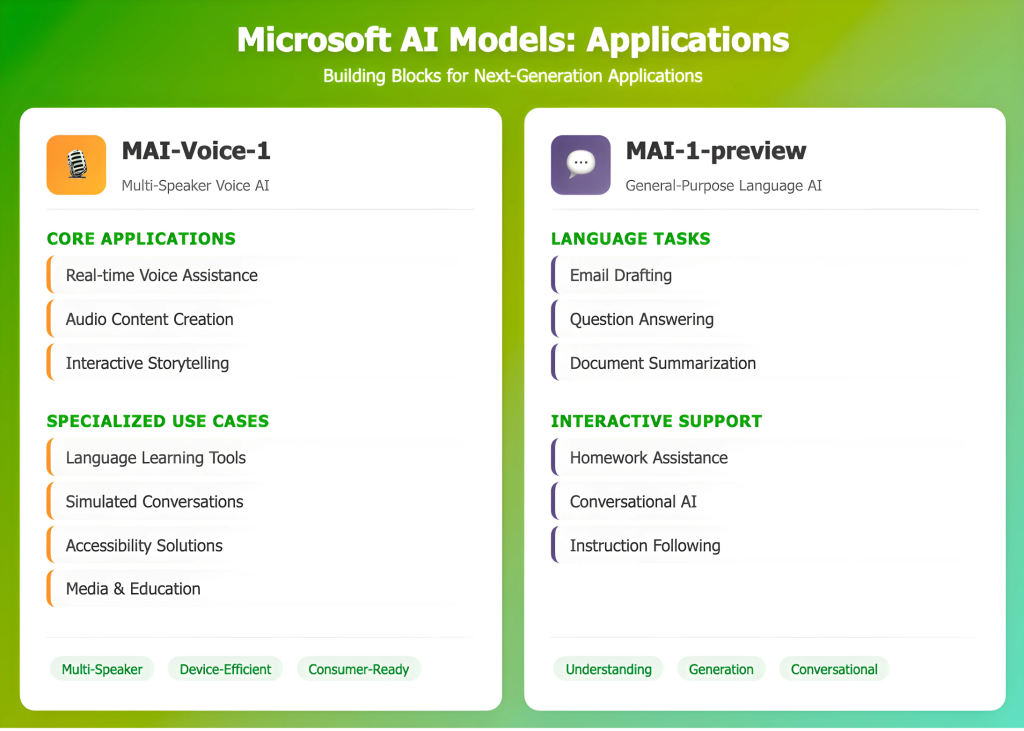

Applications

MAI-Voice-1 can be used for real-time voice assistance, audio content creation, or accessibility features in media and education. Its ability to simulate multiple speakers supports interactive scenarios such as storytelling, language learning, or simulated conversations. The model’s efficiency also allows deployment on consumer hardware.

MAI-1-preview focuses on general language understanding and generation, assisting with tasks such as drafting emails, answering questions, summarizing text, or helping students understand and complete schoolwork through conversation.

Conclusion

By releasing MAI-Voice-1 and MAI-1-preview, Microsoft shows that it can now develop core generative AI models in-house, backed by substantial investment in training infrastructure and technical talent. Both models are built for practical, real-world use and are being refined through user feedback. This development adds to the diversity of model architectures and training methods in the field, with a focus on efficient, reliable systems suited to integration into everyday applications. Microsoft’s approach (large-scale resources, staged deployment, and direct engagement with users) illustrates how organizations can advance AI capabilities while emphasizing practical, incremental improvements.


Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of artificial intelligence for social good. His most recent endeavor is the launch of Marktechpost, an artificial intelligence media platform known for in-depth coverage of machine learning and deep learning news that is both technically sound and easily understood by a wide audience. The platform has over 2 million monthly views, demonstrating its popularity among readers.