Chunking vs. tokenization: Key differences in AI text processing

Introduction

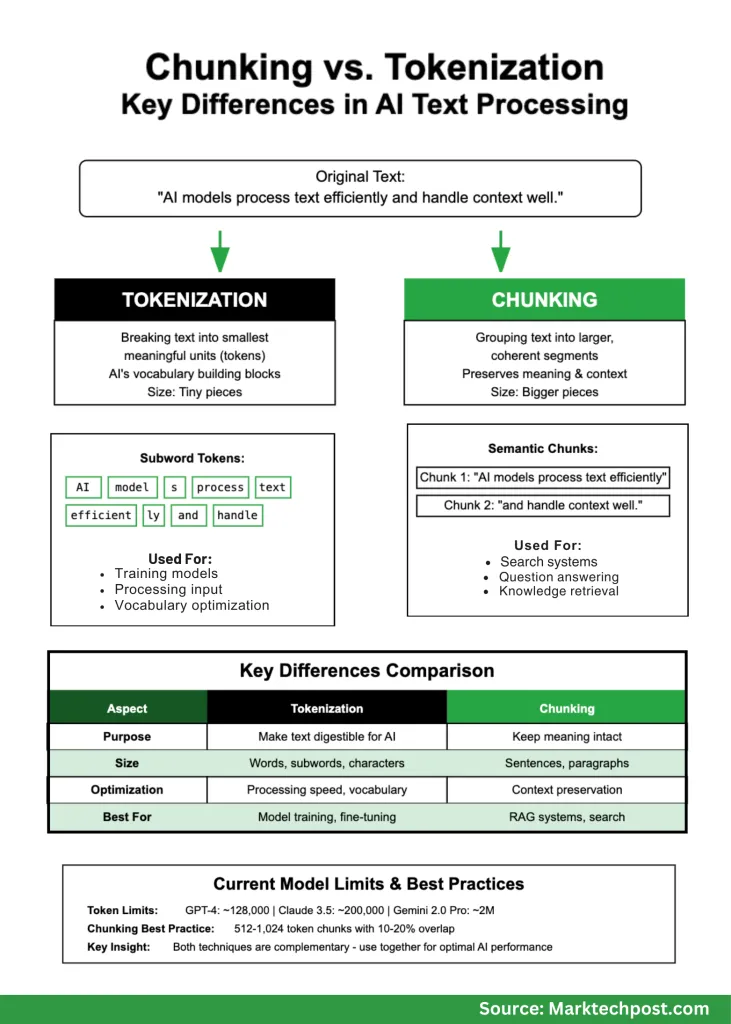

When you work with AI and natural language processing, you quickly run into two fundamental concepts that are often confused: tokenization and chunking. Both involve breaking text into smaller pieces, but they serve completely different purposes and operate at different scales. If you are building an AI application, understanding the difference is not just academic; it is crucial to creating systems that actually work well.

Think of it this way: if you are making a sandwich, tokenization is like cutting the ingredients into bite-sized pieces, while chunking is like organizing those pieces into logical groups that make sense to eat together. Both are necessary, but they solve different problems.

What is tokenization?

Tokenization is the process of breaking text into the smallest meaningful units that an AI model can understand. These units, called tokens, are the basic building blocks that language models work with. You can think of tokens as the “words” in an AI’s vocabulary, although they are often smaller than actual words.

There are several ways to create tokens:

Word-level tokenization splits text on spaces and punctuation. It’s simple, but it creates problems with rare words the model has never seen before.

Subword tokenization is more sophisticated and is the most widely used approach today. Methods such as Byte Pair Encoding (BPE), WordPiece, and SentencePiece break words into smaller pieces based on how frequently character combinations appear in the training data. This approach handles new or rare words much better.

Character-level tokenization treats each letter as a token. It’s simple, but it creates very long sequences that are harder for models to process efficiently.

Here is a practical example:

- Original text: “AI models process text efficiently.”

- Word tokens: [“AI”, “models”, “process”, “text”, “efficiently”]

- Subword tokens: [“AI”, “model”, “s”, “process”, “text”, “efficient”, “ly”]

Note how subword tokenization splits “models” into “model” and “s” because this pattern appears frequently in training data. This helps the model understand related words, such as “modeling” or “modeled”, even if it has never seen them before.
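To make the three approaches concrete, here is a minimal sketch in Python. The vocabulary `TOY_VOCAB` is hypothetical and hand-picked for this one sentence, and the subword function uses a simple greedy longest-match rule rather than a trained BPE or WordPiece model, so treat it as an illustration of the idea and not as how any particular tokenizer works.

```python
import re

# Hypothetical toy vocabulary (an assumption for this sketch). Real subword
# vocabularies are learned from data and hold tens of thousands of entries.
TOY_VOCAB = {"AI", "model", "s", "process", "text", "efficient", "ly"}


def word_tokenize(text):
    """Word-level: keep runs of letters/digits, drop punctuation."""
    return re.findall(r"\w+", text)


def char_tokenize(text):
    """Character-level: every non-space character is its own token."""
    return [ch for ch in text if not ch.isspace()]


def subword_tokenize(text, vocab=TOY_VOCAB):
    """Greedy longest-match split of each word into vocabulary pieces."""
    tokens = []
    for word in word_tokenize(text):
        start = 0
        while start < len(word):
            # Try the longest remaining piece first, then shrink it.
            for end in range(len(word), start, -1):
                if word[start:end] in vocab:
                    tokens.append(word[start:end])
                    start = end
                    break
            else:
                # No known piece starts here: fall back to one character.
                tokens.append(word[start])
                start += 1
    return tokens


sentence = "AI models process text efficiently."
print(word_tokenize(sentence))
print(subword_tokenize(sentence))
print(len(char_tokenize(sentence)))
```

With this toy vocabulary, an unseen word such as “modeling” still starts with the known piece “model”; the rest falls back to single characters, which is the kind of graceful degradation that learned subword vocabularies aim for.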

What is chunking?

Chunking takes a completely different approach. Instead of breaking text into tiny pieces, it groups text into larger, coherent segments that preserve meaning and context. When you build applications like chatbots or search systems, you need these larger chunks to maintain the flow of ideas.

Consider reading a research paper. You don’t want the sentences scattered at random; you want related sentences grouped together so that the ideas make sense. That is exactly what chunking does for AI systems.

Here is how it works in practice:

- Original text: “AI models process text efficiently. They rely on tokens to capture meaning and context. Chunking improves retrieval.”

- Chunk 1: “AI models process text efficiently.”

- Chunk 2: “They rely on tokens to capture meaning and context.”

- Chunk 3: “Chunking improves retrieval.”
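A sentence-per-chunk split like the one above can be sketched in a few lines of Python. The regular expression is a naive heuristic (it will mis-split abbreviations such as “e.g.”), and the function names and the `sentences_per_chunk` parameter are assumptions made for this illustration.

```python
import re


def split_sentences(text):
    """Split after '.', '!' or '?' when followed by whitespace (naive)."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def chunk_by_sentences(text, sentences_per_chunk=1):
    """Group consecutive sentences into chunks of a fixed sentence count."""
    sentences = split_sentences(text)
    return [
        " ".join(sentences[i:i + sentences_per_chunk])
        for i in range(0, len(sentences), sentences_per_chunk)
    ]


doc = "First idea here. Second idea follows. Third idea ends it."
for number, chunk in enumerate(chunk_by_sentences(doc), start=1):
    print(f"Chunk {number}: {chunk}")
```

Raising `sentences_per_chunk` keeps neighboring sentences together, which is usually closer to what a retrieval system needs than one sentence per chunk.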

Modern chunking strategies have become quite sophisticated:

Fixed-length chunking creates chunks of a specific size (such as 500 words or 1,000 characters). It is predictable, but it sometimes splits related ideas awkwardly.

Semantic chunking is smarter: it looks for natural breakpoints where the topic changes, using AI to detect when the text moves from one concept to another.

Recursive chunking works hierarchically: it first tries to split at paragraphs, then at sentences, and then at smaller units when needed.

Sliding-window chunking creates overlapping chunks so that important context is not lost at the boundaries.
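The recursive strategy is the least obvious of these, so here is a minimal sketch of it. The separator list, the `max_chars` default, and the function name are assumptions for illustration. Unlike production splitters, this version does not merge small neighboring pieces back together, so it tends to produce more, smaller chunks than necessary.

```python
import re

# Coarse-to-fine separators: blank line (paragraph), sentence end, whitespace.
SEPARATORS = (r"\n\s*\n", r"(?<=[.!?])\s+", r"\s+")


def recursive_chunk(text, max_chars=200, level=0):
    """Split at paragraphs, then sentences, then words, until pieces fit."""
    text = text.strip()
    if len(text) <= max_chars:
        return [text] if text else []
    if level >= len(SEPARATORS):
        # Nothing left to split on: fall back to fixed-length slices.
        return [text[i:i + max_chars] for i in range(0, len(text), max_chars)]
    chunks = []
    for piece in re.split(SEPARATORS[level], text):
        chunks.extend(recursive_chunk(piece, max_chars, level + 1))
    return chunks


doc = "Para one is short.\n\nSentence A is here. Sentence B is here too."
print(recursive_chunk(doc, max_chars=25))
```

The first paragraph already fits within 25 characters and is kept whole, while the second is too long and is broken at its sentence boundary. The final fallback is plain fixed-length slicing, which only triggers for a single "word" longer than `max_chars`.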

Key differences that matter

Understanding when to use each approach makes all the difference in your AI applications:

| What you’re doing | Tokenization | Chunking |
|---|---|---|
| Size | Tiny pieces (words, parts of words) | Bigger pieces (sentences, paragraphs) |
| Goal | Make text digestible for AI models | Keep meaning intact for humans and AI |
| When you use it | Training models, processing input | Search systems, question answering |
| What you optimize for | Processing speed, vocabulary size | Context preservation, retrieval accuracy |

Why this matters for real applications

For AI model performance

When you use a language model, tokenization directly affects how much you pay and how fast the system runs. Models like GPT-4 are billed by the token, so efficient tokenization saves money. Current models also have different context limits:

- GPT-4: Approximately 128,000 tokens

- Claude 3.5: Up to 200,000 tokens

- Gemini 2.0 Pro: Up to 2 million tokens
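The arithmetic behind these limits and per-token billing is simple enough to sketch. The limits below are copied from the list above and may be out of date; the price is a caller-supplied placeholder, since actual pricing varies by provider and model. The function names and the `reserved_for_output` parameter are assumptions for this example.

```python
# Context limits as quoted in the list above (approximate; check current docs).
CONTEXT_LIMITS = {
    "GPT-4": 128_000,
    "Claude 3.5": 200_000,
    "Gemini 2.0 Pro": 2_000_000,
}


def fits_in_context(prompt_tokens, model, reserved_for_output=1_000):
    """True if the prompt plus an output budget fits in the model's window."""
    return prompt_tokens + reserved_for_output <= CONTEXT_LIMITS[model]


def estimate_cost(tokens, price_per_million_tokens):
    """Cost of `tokens` tokens at a caller-supplied price per million tokens."""
    return tokens / 1_000_000 * price_per_million_tokens


prompt_tokens = 150_000
for model in CONTEXT_LIMITS:
    print(model, fits_in_context(prompt_tokens, model))

# Hypothetical price of 10.0 currency units per million tokens.
print(estimate_cost(prompt_tokens, price_per_million_tokens=10.0))
```

A tokenizer that needs fewer tokens for the same text lowers both numbers at once: the prompt is more likely to fit, and it costs less to send.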

Recent research suggests that larger models can actually work better with larger vocabularies. For example, Llama-2 70B uses about 32,000 different tokens, but it would likely perform better with about 216,000. This matters because the right vocabulary size affects both performance and efficiency.

For search and question-answering systems

Chunking strategy can make or break your RAG (retrieval-augmented generation) system. If your chunks are too small, you lose context. If they are too big, you overwhelm the model with irrelevant information. Get it right and your system provides accurate, useful answers. Get it wrong and you get hallucinations and poor results.

Companies that build enterprise AI systems have found that smart chunking strategies greatly reduce the frustrating cases where the AI makes up facts or gives nonsensical answers.

Where you’ll use each approach

Tokenization is essential for:

Training new models – You can’t train a language model without tokenizing your training data first. The tokenization strategy affects everything about how well the model learns.

Fine-tuning existing models – When you adapt a pretrained model to a specific domain (such as medical or legal text), you need to consider carefully whether the existing tokenization suits your specialized vocabulary.

Cross-language applications – Subword tokenization is especially useful when working with languages that have complex word structures or when building multilingual systems.

Chunking is critical for:

Building company knowledge bases – When you want employees to ask questions and get accurate answers from internal documents, proper chunking ensures that the AI retrieves relevant, complete information.

Document analysis at scale – Whether you are dealing with legal contracts, research papers, or customer feedback, chunking helps maintain the structure and meaning of the document.

Search systems – Modern search goes beyond keyword matching. Semantic chunking helps the system understand what the user really wants and retrieve the most relevant information.

Current best practices (what actually works)

After watching many real-world implementations, here is what tends to work:

For chunking:

- Start with chunks of 512-1024 tokens

- Add 10-20% overlap between chunks to preserve context

- Use semantic boundaries where possible (end of sentence, paragraph)

- Test with your actual use case and adjust based on the results

- Monitor hallucinations and adjust your approach accordingly
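The first two recommendations translate directly into a sliding window over a token list. This sketch assumes the text has already been tokenized (any list works, including token IDs from a real tokenizer); the function name and defaults are illustrative, with 512 tokens and 15% overlap chosen from inside the ranges above.

```python
def chunk_tokens(tokens, chunk_size=512, overlap_ratio=0.15):
    """Return overlapping windows of `chunk_size` tokens."""
    if chunk_size < 1:
        raise ValueError("chunk_size must be at least 1")
    if not 0 <= overlap_ratio < 1:
        raise ValueError("overlap_ratio must be in [0, 1)")
    overlap = int(chunk_size * overlap_ratio)
    stride = chunk_size - overlap  # how far each new window advances
    chunks = []
    for start in range(0, len(tokens), stride):
        chunks.append(tokens[start:start + chunk_size])
        if start + chunk_size >= len(tokens):
            break  # this window already reaches the end of the input
    return chunks


token_ids = list(range(1000))  # stand-in for real token IDs
chunks = chunk_tokens(token_ids)
print(len(chunks), [len(c) for c in chunks])
```

Each window shares its first `overlap` tokens with the end of the previous one, so a sentence that straddles a boundary appears intact in at least one chunk as long as it is shorter than the overlap.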

For tokenization:

- Use established methods (BPE, WordPiece, SentencePiece) instead of building your own

- Consider your domain – Medical or legal texts may require a specialized approach

- Monitor out-of-vocabulary rates in production

- Balance compression (fewer tokens) against meaning preservation
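As a rough illustration of the monitoring point, the following sketch computes an out-of-vocabulary rate for a word-level vocabulary. The function name and the sample vocabulary are assumptions. For subword tokenizers, which rarely produce truly unknown tokens, a comparable signal would be the share of unknown-token outputs or the average number of tokens per word, depending on the tokenizer.

```python
import re


def oov_rate(text, vocab):
    """Fraction of word occurrences in `text` that are missing from `vocab`."""
    words = re.findall(r"\w+", text.lower())
    if not words:
        return 0.0
    missing = sum(1 for word in words if word not in vocab)
    return missing / len(words)


# Hypothetical general-purpose vocabulary applied to a medical sentence.
vocab = {"the", "patient", "has", "a"}
rate = oov_rate("The patient has a myocardial infarction", vocab)
print(f"{rate:.0%} of words are out of vocabulary")
```

A rate that climbs after deployment is a hint that production text has drifted away from the data the vocabulary was built on.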

Summary

Tokenization and chunking are not competing techniques; they are complementary tools that solve different problems. Tokenization makes text digestible for AI models, while chunking preserves meaning for practical applications.

As AI systems become more sophisticated, both techniques keep evolving. Context windows are getting larger, vocabularies are getting more efficient, and chunking strategies are getting smarter at preserving semantic meaning.

The key is to understand what you want to accomplish. Building a chatbot? Focus on chunking strategies that preserve conversational context. Training a model? Optimize your tokenization for efficiency and coverage. Building an enterprise search system? You will need both: smart tokenization for efficiency and smart chunking for accuracy.

Michal Sutter is a data science professional with a master’s degree in data science from the University of Padua. With a solid foundation in statistical analysis, machine learning, and data engineering, Michal excels in transforming complex datasets into actionable insights.