Alibaba Qwen team releases Mobile-Agent-v3 and GUI-Owl: Next-generation multi-agent framework for GUI automation

Introduction: The rise of GUI agents

Modern computing is dominated by graphical user interfaces across devices (mobile, desktop, and web). Traditionally, automating tasks in these environments has been limited to scripted macros or brittle, hand-engineered rules. Recent advances in vision-language models raise the tantalizing possibility of agents that understand screens, reason about tasks, and carry out actions just as humans do. However, most approaches either rely on closed-source, black-box models or struggle with generalization, reasoning fidelity, and cross-platform robustness.

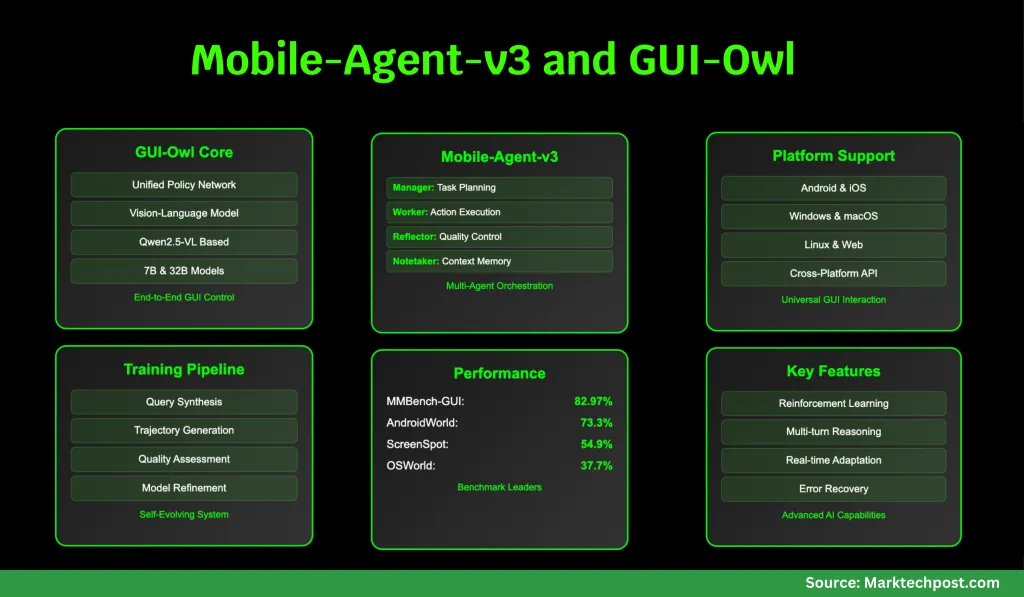

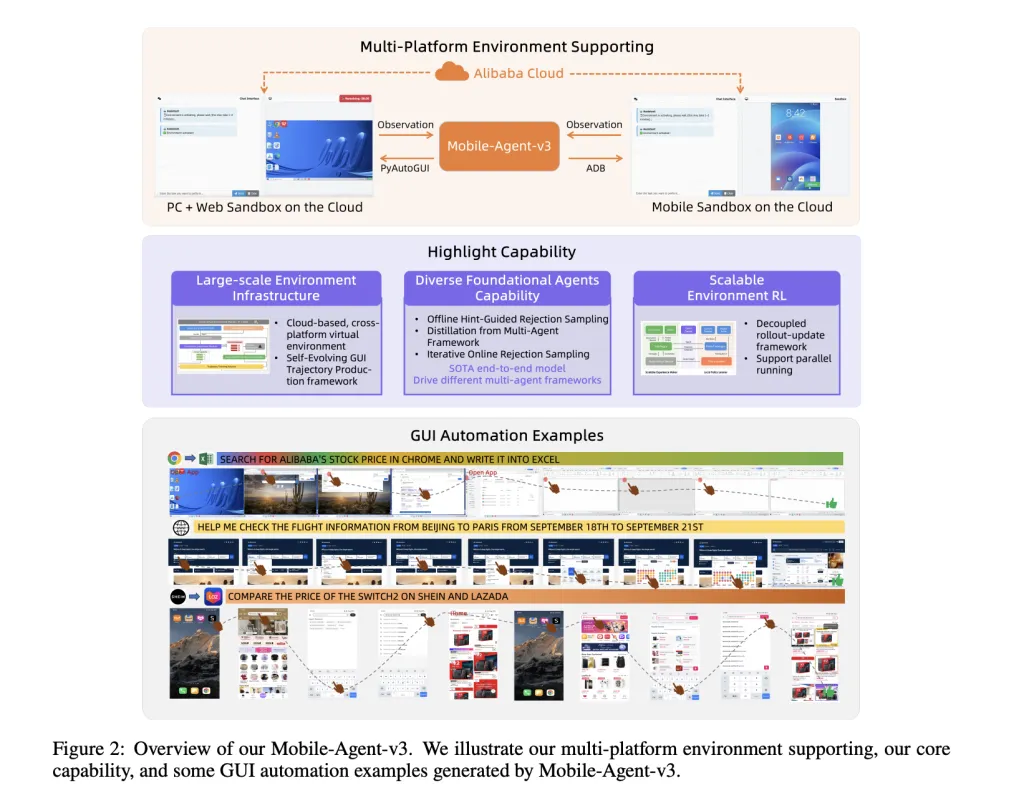

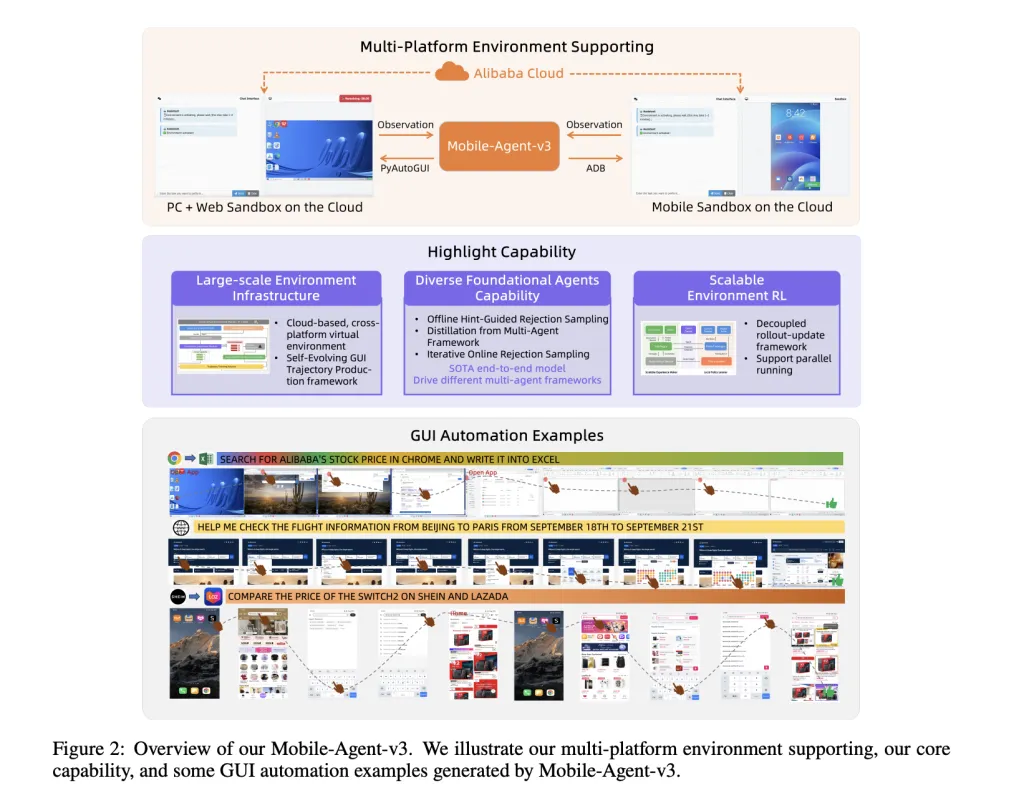

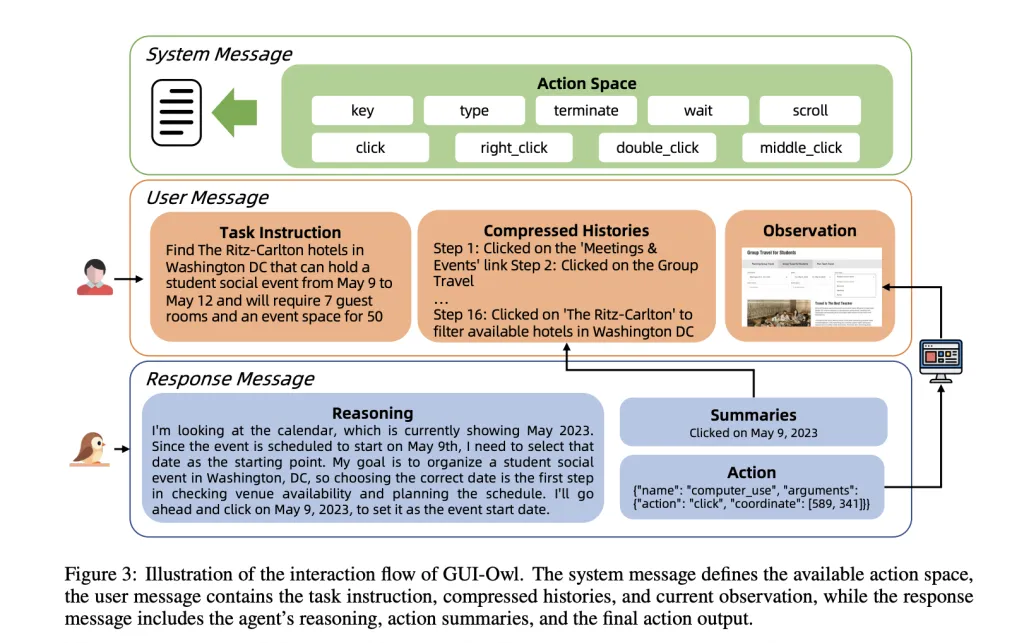

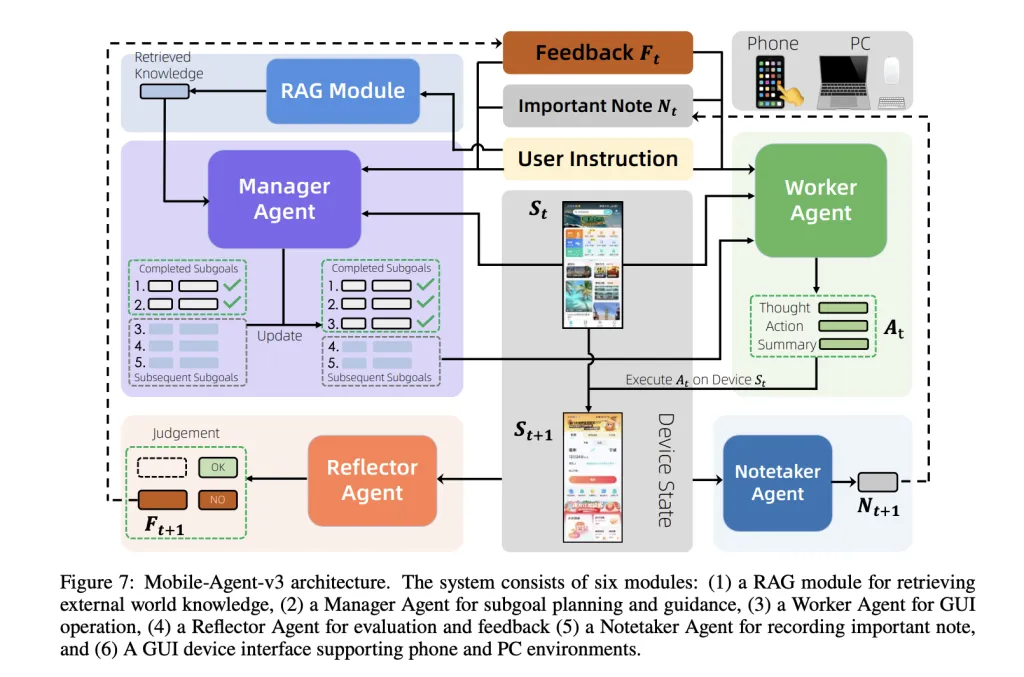

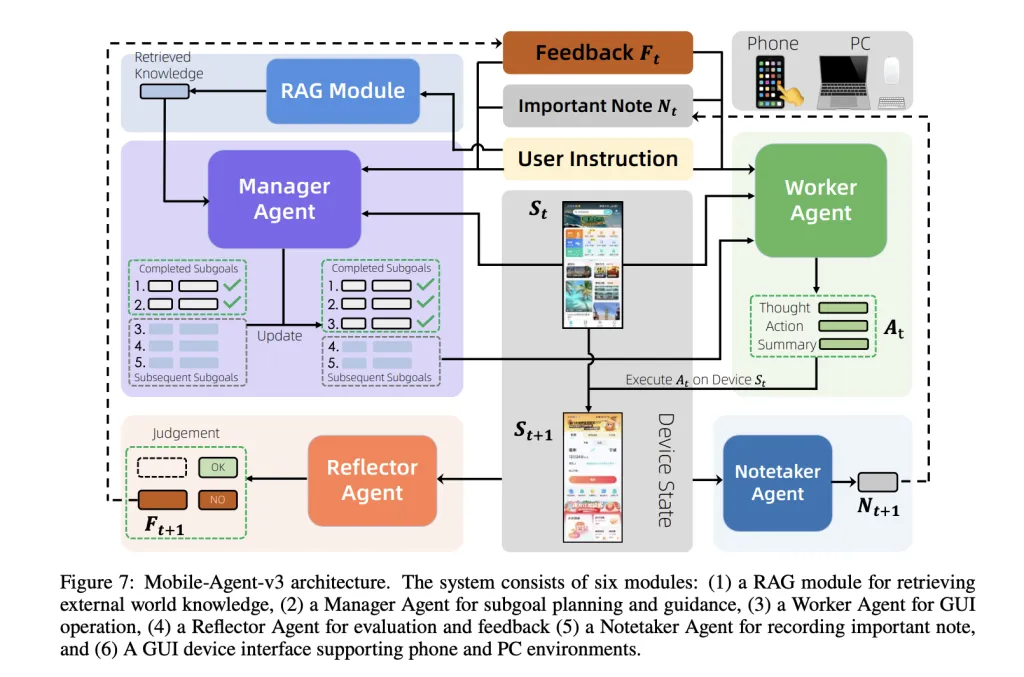

A team of researchers from Alibaba Qwen introduced GUI-Owl and Mobile-Agent-v3 to tackle these challenges head-on. GUI-Owl is a native, end-to-end multimodal agent model built on Qwen2.5-VL and extensively trained on large-scale, diverse GUI interaction data. It unifies perception, grounding, reasoning, planning, and action execution within a single policy network, enabling robust cross-platform interaction and explicit multi-turn reasoning. The Mobile-Agent-v3 framework uses GUI-Owl as its foundational module and orchestrates multiple specialized agents (Manager, Worker, Reflector, Notetaker) to handle complex, long-horizon tasks with dynamic planning, reflection, and memory.

Architecture and core functions

GUI-Owl: The foundational model

GUI-Owl is designed from the ground up to handle the heterogeneity and dynamism of real-world GUI environments. It is initialized from Qwen2.5-VL, a state-of-the-art vision-language model, but undergoes extensive additional training on specialized GUI datasets. This training covers grounding (locating UI elements from natural-language queries), task planning (breaking complex instructions into actionable steps), and action semantics (understanding how actions change the GUI state). The model is fine-tuned through a mix of supervised learning and reinforcement learning (RL), with a focus on aligning its decisions with real-world task success.

GUI-Owl's key innovations:

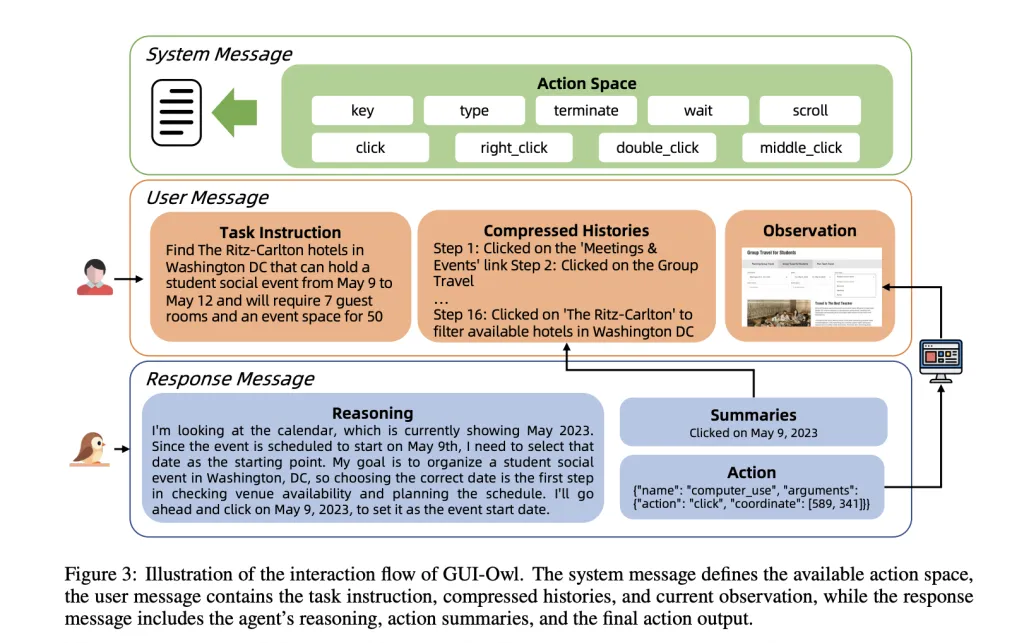

- Unified policy network: Unlike previous work that splits perception, planning, and execution into separate modules, GUI-Owl integrates these capabilities into a single neural network. This enables seamless multi-turn decision-making and explicit intermediate reasoning, both critical for handling the ambiguity and variability of real GUIs.

- Scalable training infrastructure: The team built a cloud-based virtual environment spanning Android, Ubuntu, macOS, and Windows. This "self-evolving GUI trajectory production" pipeline generates high-quality interaction data by letting GUI-Owl and Mobile-Agent-v3 interact with virtual devices and then rigorously judging whether the resulting trajectories are correct. Successful trajectories are used for further training, creating a virtuous cycle of improvement.

- Diverse data synthesis: To give the model strong grounding and reasoning abilities, the team adopted a variety of data synthesis strategies: generating UI-element grounding tasks from accessibility trees and crawled screenshots, distilling task-planning knowledge from historical trajectories and large pretrained LLMs, and modeling action effects by training the model to predict how actions change the screen.

- Reinforcement learning alignment: GUI-Owl is further refined through a scalable RL framework that supports fully asynchronous training and a novel "Trajectory-aware Relative Policy Optimization" (TRPO). TRPO assigns credit across long, variable-length action sequences, a key advance for GUI tasks where rewards are sparse and available only once the task is complete.
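The article does not spell out the TRPO objective, so the sketch below only illustrates the general idea of trajectory-level credit assignment under a sparse, end-of-task reward. It assumes a GRPO-style scheme: several rollouts of the same task are scored once at the end, each final reward is standardized against the group, and the resulting advantage is shared by every step of that trajectory. The function name `trajectory_advantages` and the `eps` constant are hypothetical, and the policy-gradient loss itself is omitted.

```python
from statistics import mean, pstdev
from typing import List


def trajectory_advantages(final_rewards: List[float], step_counts: List[int],
                          eps: float = 1e-6) -> List[List[float]]:
    """Broadcast a group-normalized, trajectory-level advantage to every step.

    final_rewards: one sparse end-of-task reward per rollout of the SAME task
                   (e.g. 1.0 = success, 0.0 = failure).
    step_counts:   number of actions in each rollout; lengths may differ.
    Returns one list of per-step advantages for each rollout.
    """
    if len(final_rewards) != len(step_counts):
        raise ValueError("need exactly one step count per rollout")
    if not final_rewards:
        return []
    mu = mean(final_rewards)
    sigma = pstdev(final_rewards)  # 0 if every rollout got the same reward
    per_step: List[List[float]] = []
    for reward, n_steps in zip(final_rewards, step_counts):
        # Standardize against the group: better-than-average rollouts get a
        # positive advantage, worse-than-average ones a negative advantage.
        adv = (reward - mu) / (sigma + eps)
        # No intermediate rewards exist, so every action shares the same credit.
        per_step.append([adv] * n_steps)
    return per_step


# Four rollouts of one task: two succeed, two fail, with different lengths.
advantages = trajectory_advantages([1.0, 0.0, 1.0, 0.0], [3, 5, 2, 4])
```

If all rollouts in a group succeed (or all fail), every advantage is zero, so such groups contribute no learning signal under this assumed scheme.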

Mobile-Agent-v3: Multi-Agent Coordination

Mobile-Agent-v3 is a general-purpose agentic framework designed to tackle complex, multi-step, cross-application workflows. It breaks tasks down into subgoals, dynamically updates plans based on execution feedback, and maintains persistent contextual memory. The framework coordinates four specialized agents:

- Manager Agent: Decomposes high-level instructions into subgoals and updates plans dynamically based on results and feedback.

- Worker Agent: Given the current GUI state, prior feedback, and accumulated notes, executes the most relevant actionable subgoal.

- Reflector Agent: Evaluates the outcome of each action by comparing expected and actual state transitions, and generates diagnostic feedback.

- Notetaker Agent: Persists critical information (e.g., codes, credentials) across application boundaries, enabling long-horizon tasks.
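In Mobile-Agent-v3 these roles are played by GUI-Owl itself. The sketch below swaps them for plain Python callables to show one plausible control flow, assuming the Reflector's verdict decides whether the Manager advances to the next subgoal or replans. `run_task`, `Memory`, and the verification-code scenario are hypothetical illustrations, not the framework's API.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple


@dataclass
class Memory:
    notes: List[str] = field(default_factory=list)     # Notetaker: facts carried across apps
    feedback: List[str] = field(default_factory=list)  # Reflector: diagnostics for the Manager


def run_task(
    instruction: str,
    plan: Callable[[str], List[str]],                  # Manager: initial decomposition
    replan: Callable[[List[str], Memory], List[str]],  # Manager: revise remaining subgoals
    act: Callable[[str, Memory], str],                 # Worker: execute one subgoal
    reflect: Callable[[str, str], Tuple[bool, str]],   # Reflector: judge the outcome
    take_note: Callable[[str, str], Optional[str]],    # Notetaker: extract info worth keeping
    max_steps: int = 20,
) -> Tuple[bool, List[Tuple[str, bool]], Memory]:
    memory = Memory()
    remaining = plan(instruction)
    trace: List[Tuple[str, bool]] = []
    for _ in range(max_steps):
        if not remaining:
            break
        subgoal = remaining[0]
        outcome = act(subgoal, memory)
        ok, diagnosis = reflect(subgoal, outcome)
        trace.append((subgoal, ok))
        if ok:
            note = take_note(subgoal, outcome)
            if note is not None:
                memory.notes.append(note)
            remaining = remaining[1:]               # advance to the next subgoal
        else:
            memory.feedback.append(diagnosis)
            remaining = replan(remaining, memory)   # Manager reacts to the failure
    return not remaining, trace, memory


# --- Toy usage: copy a verification code from Messages into a banking app ---
attempts: Dict[str, int] = {}


def toy_act(subgoal: str, memory: Memory) -> str:
    attempts[subgoal] = attempts.get(subgoal, 0) + 1
    if subgoal == "open Bank" and attempts[subgoal] == 1:
        return "error: Bank icon not on home screen"  # simulated first-try failure
    if subgoal == "type code":
        # The Worker reads the code the Notetaker saved while in another app.
        code = next(n.split("=", 1)[1] for n in memory.notes if n.startswith("code="))
        return f"done: typed {code}"
    return f"done: {subgoal}"


def toy_reflect(subgoal: str, outcome: str) -> Tuple[bool, str]:
    ok = outcome.startswith("done")
    return ok, "" if ok else f"{subgoal} failed ({outcome})"


success, trace, memory = run_task(
    "Copy the verification code from Messages into the Bank app",
    plan=lambda instr: ["open Messages", "read code", "open Bank", "type code"],
    replan=lambda remaining, mem: ["open app drawer"] + remaining,
    act=toy_act,
    reflect=toy_reflect,
    take_note=lambda subgoal, outcome: "code=4821" if subgoal == "read code" else None,
)
print(success, [s for s, _ in trace], memory.notes)
```

The failed "open Bank" step triggers a replan that inserts a recovery subgoal, and the code noted in one app is reused in another, mirroring the roles described above.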

Training and data pipelines

A major bottleneck in GUI agent development is the lack of high-quality, scalable training data. Traditional approaches rely on costly manual annotation, which does not scale to the diversity and dynamism of real-world GUIs. The GUI-Owl team addresses this with a self-evolving data production pipeline:

- Query generation: For mobile apps, a directed acyclic graph (DAG) models realistic navigation flows and input slot-value pairs. LLMs synthesize natural-language instructions from these paths, which are then refined and verified against the actual app interfaces.

- Trajectory generation: Given a query, GUI-Owl or Mobile-Agent-v3 interacts with the virtual environment to produce a trajectory, i.e., a sequence of actions and state transitions.

- Trajectory correctness judgment: A two-level critic system evaluates each individual step (did the action have the intended effect?) and the overall trajectory (did the task succeed?). It draws on both textual and multimodal reasoning and reaches a consensus-based final judgment.

- Guidance synthesis: For difficult queries, the system synthesizes step-by-step guidance from successful trajectories (human or model), helping agents learn from positive examples.

- Iterative training: Newly generated successful trajectories are added to the training set and the model is retrained, closing the self-improvement loop.
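The loop below is a toy sketch of that pipeline under several assumptions: a hand-written navigation DAG for a hypothetical shopping app, a string template standing in for the LLM that writes instructions, a majority vote as the consensus rule, and a stubbed rollout in place of real GUI-Owl interaction. Retraining is only marked by a comment. None of the names (`NAV_DAG`, `path_to_query`, `accept`, `self_evolve`) come from the paper.

```python
from typing import Callable, Dict, List, Set, Tuple

# Hypothetical navigation DAG for a toy shopping app: page -> pages reachable from it.
NAV_DAG: Dict[str, List[str]] = {
    "home": ["search", "cart"],
    "search": ["product"],
    "product": ["cart"],
    "cart": ["checkout"],
    "checkout": [],
}


def enumerate_paths(dag: Dict[str, List[str]], start: str) -> List[List[str]]:
    """Every navigation path from `start` to a leaf page (finite because the graph is acyclic)."""
    if not dag[start]:
        return [[start]]
    return [[start] + rest for nxt in dag[start] for rest in enumerate_paths(dag, nxt)]


def path_to_query(path: List[str], slots: Dict[str, str]) -> str:
    """Template stand-in for the LLM that rewrites a path plus slot values as an instruction."""
    via = " > ".join(path[1:-1]) or "no intermediate pages"
    filled = ", ".join(f"{k}={v}" for k, v in slots.items())
    return f"Go from {path[0]} to {path[-1]} via {via} ({filled})"


def accept(step_verdicts: List[bool], trajectory_votes: List[bool]) -> bool:
    """Assumed consensus rule: no step flagged as wrong, and a strict majority of
    trajectory-level critics (e.g. a text-only and a multimodal one) report success."""
    return all(step_verdicts) and 2 * sum(trajectory_votes) > len(trajectory_votes)


Verdicts = Tuple[List[bool], List[bool]]  # (step-level verdicts, trajectory-level votes)


def self_evolve(queries: List[str], rollout_and_judge: Callable[[str, int], Verdicts],
                rounds: int) -> List[Tuple[int, str]]:
    dataset: List[Tuple[int, str]] = []
    solved: Set[str] = set()
    for r in range(rounds):
        for q in queries:
            if q in solved:
                continue
            steps, votes = rollout_and_judge(q, r)
            if accept(steps, votes):
                dataset.append((r, q))  # successful trajectory joins the training set
                solved.add(q)
        # A real pipeline would retrain the model on `dataset` here, so the next
        # round's rollouts come from an improved policy.
    return dataset


# --- Toy usage: the longer query only succeeds after one round of "retraining" ---
queries = [path_to_query(p, {"item": "headphones"}) for p in enumerate_paths(NAV_DAG, "home")]


def toy_rollout(query: str, round_idx: int) -> Verdicts:
    if "product" in query and round_idx == 0:
        return [True, False], [False, True]  # a step went wrong; critics disagree
    return [True, True], [True, True]


dataset = self_evolve(queries, toy_rollout, rounds=2)
```

Because the graph is acyclic, path enumeration always terminates; a real app graph would be far larger and would likely be sampled rather than enumerated exhaustively.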

Benchmarks and performance

GUI-Owl and Mobile-Agent-v3 are rigorously evaluated across a suite of GUI automation benchmarks covering grounding, single-step decision-making, question answering, and end-to-end task completion.

Grounding and UI understanding

On grounding tasks (locating UI elements from natural-language queries), GUI-Owl-7B outperforms all open-source models of comparable size, while GUI-Owl-32B even surpasses proprietary models such as GPT-4o and Claude 3.7. For example, on the MMBench-GUI L2 benchmark (covering Windows, macOS, Linux, iOS, Android, and the web), GUI-Owl-7B scores 80.49 and GUI-Owl-32B reaches 82.97, both leading the field. On ScreenSpot-Pro, which focuses on high-resolution, complex interfaces, GUI-Owl-7B scores 54.9, significantly outperforming UI-TARS-72B and Qwen2.5-VL-72B. These results show that GUI-Owl's grounding capability is both broad and deep, spanning everything from simple button clicks to fine-grained text localization.
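Grounding benchmarks in the ScreenSpot family are commonly scored by checking whether the predicted click point lands inside the ground-truth element's bounding box. The sketch below shows that metric, assuming pixel coordinates and one target element per instruction; individual benchmarks may add details (coordinate normalization, per-platform breakdowns) that are not modeled here, and the function names are hypothetical.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max) in pixels
Point = Tuple[float, float]              # predicted click location (x, y)


def point_in_box(p: Point, box: Box) -> bool:
    """True if the click point falls inside (or on the edge of) the element's box."""
    x, y = p
    x0, y0, x1, y1 = box
    return x0 <= x <= x1 and y0 <= y <= y1


def grounding_accuracy(preds: List[Point], targets: List[Box]) -> float:
    """Percentage of instructions whose predicted click lands on the target element."""
    if len(preds) != len(targets):
        raise ValueError("need exactly one prediction per target")
    if not targets:
        raise ValueError("no targets to score")
    hits = sum(point_in_box(p, b) for p, b in zip(preds, targets))
    return 100.0 * hits / len(targets)


# Three of the four clicks land inside their target boxes.
score = grounding_accuracy(
    [(50, 20), (300, 400), (10, 10), (640, 360)],
    [(40, 10, 80, 30), (0, 0, 100, 100), (0, 0, 20, 20), (600, 340, 700, 380)],
)
```

Scoring a single point rather than a predicted box rewards exactly what an agent needs at execution time: a click that hits the right element.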

Comprehensive GUI understanding and single-step decision making

MMBench-GUI L1 uses question answering to evaluate UI understanding and single-step decision-making. Here, GUI-Owl-7B scores 84.5 (easy), 86.9 (medium), and 90.9 (hard), far exceeding all existing models. This reflects not only accurate perception but also strong reasoning about interface states and actions. On AndroidControl, which focuses on single-step decision-making in pre-annotated environments, GUI-Owl-7B reaches 72.8, the highest among 7B models, while GUI-Owl-32B reaches 76.6, surpassing even the largest open and proprietary models.

End-to-end and multi-agent capabilities

The real test of a GUI agent is its ability to complete realistic, multi-step tasks in interactive environments. AndroidWorld and OSWorld are two such benchmarks, in which the agent must autonomously navigate apps and operating systems to fulfill user instructions. GUI-Owl-7B scores 66.4 on AndroidWorld and 34.9 on OSWorld, while Mobile-Agent-v3 (with GUI-Owl at its core) achieves 73.3 and 37.7, setting a new state of the art for open-source frameworks. The multi-agent design is especially effective on long-horizon, error-prone tasks, because the Reflector and Manager agents enable dynamic replanning and recovery from errors.

Real-world integration

The research team also evaluated GUI-Owl within established agentic frameworks: Mobile-Agent-E (Android) and Agent-S2 (desktop). Here, GUI-Owl-32B achieves a 62.1% success rate on AndroidWorld and 48.4% on a challenging OSWorld subset, significantly outperforming all baselines. This underscores GUI-Owl's practical value as a plug-and-play module for different agent systems.

Real-world deployment

GUI-Owl supports a rich, platform-specific action space. On mobile devices, this includes clicks, long presses, swipes, text entry, system buttons (back, home, etc.), and launching apps. On desktop, actions include mouse movement, clicking, dragging, scrolling, keyboard input, and application-specific commands. Actions are translated into low-level device commands (adb for Android, pyautogui for desktop), making the framework easy to deploy in real-world environments.
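As a rough illustration of the Android side, the sketch below maps abstract actions onto standard `adb shell input` and `monkey` commands. The action dictionary format and its field names (`type`, `x`, `duration_ms`, and so on) are hypothetical and are not GUI-Owl's actual output schema; the function only builds argument lists and does not run them.

```python
import shlex
from typing import Any, Dict, List

# Hypothetical button names mapped to standard Android key codes.
KEYCODES = {"back": "KEYCODE_BACK", "home": "KEYCODE_HOME", "enter": "KEYCODE_ENTER"}


def to_adb(action: Dict[str, Any]) -> List[str]:
    """Translate one abstract mobile action into an `adb shell` command (argument list)."""
    kind = action["type"]
    base = ["adb", "shell"]
    if kind == "click":
        return base + ["input", "tap", str(action["x"]), str(action["y"])]
    if kind == "long_press":
        # A zero-length swipe held in place for `duration_ms` acts as a long press.
        x, y = str(action["x"]), str(action["y"])
        return base + ["input", "swipe", x, y, x, y, str(action.get("duration_ms", 1000))]
    if kind == "swipe":
        coords = [str(action[k]) for k in ("x1", "y1", "x2", "y2")]
        return base + ["input", "swipe", *coords, str(action.get("duration_ms", 300))]
    if kind == "type":
        # `input text` needs spaces encoded as %s; shlex.quote protects other characters
        # from the device-side shell, which re-parses the arguments adb forwards.
        return base + ["input", "text", shlex.quote(action["text"].replace(" ", "%s"))]
    if kind == "system_button":
        return base + ["input", "keyevent", KEYCODES[action["button"]]]
    if kind == "open_app":
        # Start the package's launcher activity through the monkey tool.
        return base + ["monkey", "-p", action["package"],
                       "-c", "android.intent.category.LAUNCHER", "1"]
    raise ValueError(f"unsupported action type: {kind!r}")
```

On a machine with adb installed and a device attached, a command could be run with `subprocess.run(to_adb(action), check=True)`. Returning argument lists rather than executing them keeps the translation testable without a device.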

The agent's reasoning and decision-making process is transparent: at each step, it observes the screen, reviews a compressed history, reasons about the next operation, summarizes its intent, and executes the action. This explicit intermediate reasoning not only improves robustness but also enables integration into larger multi-agent systems, where different "roles" (e.g., planner, executor, critic) can specialize and collaborate.
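A minimal sketch of how per-step context might be assembled, assuming the history is compressed by keeping only each step's one-line intent summary and dropping all but the most recent few steps. The screen is a text stand-in for a screenshot, and `Step`, `compress_history`, and `build_prompt` are hypothetical; the article does not describe GUI-Owl's actual compression scheme.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Step:
    reasoning: str  # free-form thought about the next operation (not carried forward)
    summary: str    # one-line statement of intent, kept in the compressed history
    action: str     # the concrete action that was executed


def compress_history(steps: List[Step], keep_last: int = 3) -> List[str]:
    """Keep intent summaries only, and only the most recent `keep_last` of them verbatim."""
    recent = steps[-keep_last:] if keep_last > 0 else []
    omitted = len(steps) - len(recent)
    lines = [f"[{omitted} earlier steps omitted]"] if omitted else []
    lines += [f"{i}. {s.summary} -> {s.action}"
              for i, s in enumerate(recent, start=omitted + 1)]
    return lines


def build_prompt(task: str, screen: str, steps: List[Step], keep_last: int = 3) -> str:
    """Assemble the per-step context: task, compressed history, current observation."""
    history = "\n".join(compress_history(steps, keep_last)) or "(no previous steps)"
    return (f"Task: {task}\nHistory:\n{history}\nCurrent screen: {screen}\n"
            "Reason about the next operation, summarize your intent, then output one action.")


steps = [Step(f"thought {i}", f"intent {i}", f"click({i})") for i in range(1, 6)]
print(build_prompt("Turn on dark mode", "Settings > Display", steps, keep_last=2))
```

Dropping the free-form reasoning from the history keeps the context short on long tasks, while the intent summaries preserve enough of the trail for the model to tell where it is.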

Conclusion: Toward general-purpose GUI agents

GUI-Owl and Mobile-Agent-v3 represent a major leap toward general-purpose, autonomous GUI agents. By unifying perception, grounding, reasoning, and action, and by building a scalable, self-improving training pipeline, the research team has achieved state-of-the-art performance across mobile and desktop environments, surpassing even the largest proprietary models on key benchmarks.

Check out the Paper and GitHub Page.

Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of artificial intelligence for social good. His most recent endeavor is the launch of Marktechpost, an artificial intelligence media platform known for its in-depth coverage of machine learning and deep learning news that is both technically sound and easily understood by a wide audience. The platform has over 2 million monthly views, demonstrating its popularity among readers.